A Developer’s Guide to LLMs: Core Concepts and Implementation

A complete guide to integrating LLMs into your products and projects.

Large Language Models (LLMs) have been at the forefront of the AI boom in recent years. As developers, it is more important than ever to understand how LLMs work and how to integrate them effectively into our systems. In this blog post, I will guide you through the core concepts relating to LLMs and walk through some major methods of LLM inference.

All code used in the blog post can be found in the GitHub repository: mukulboro/llm-aio

Introduction

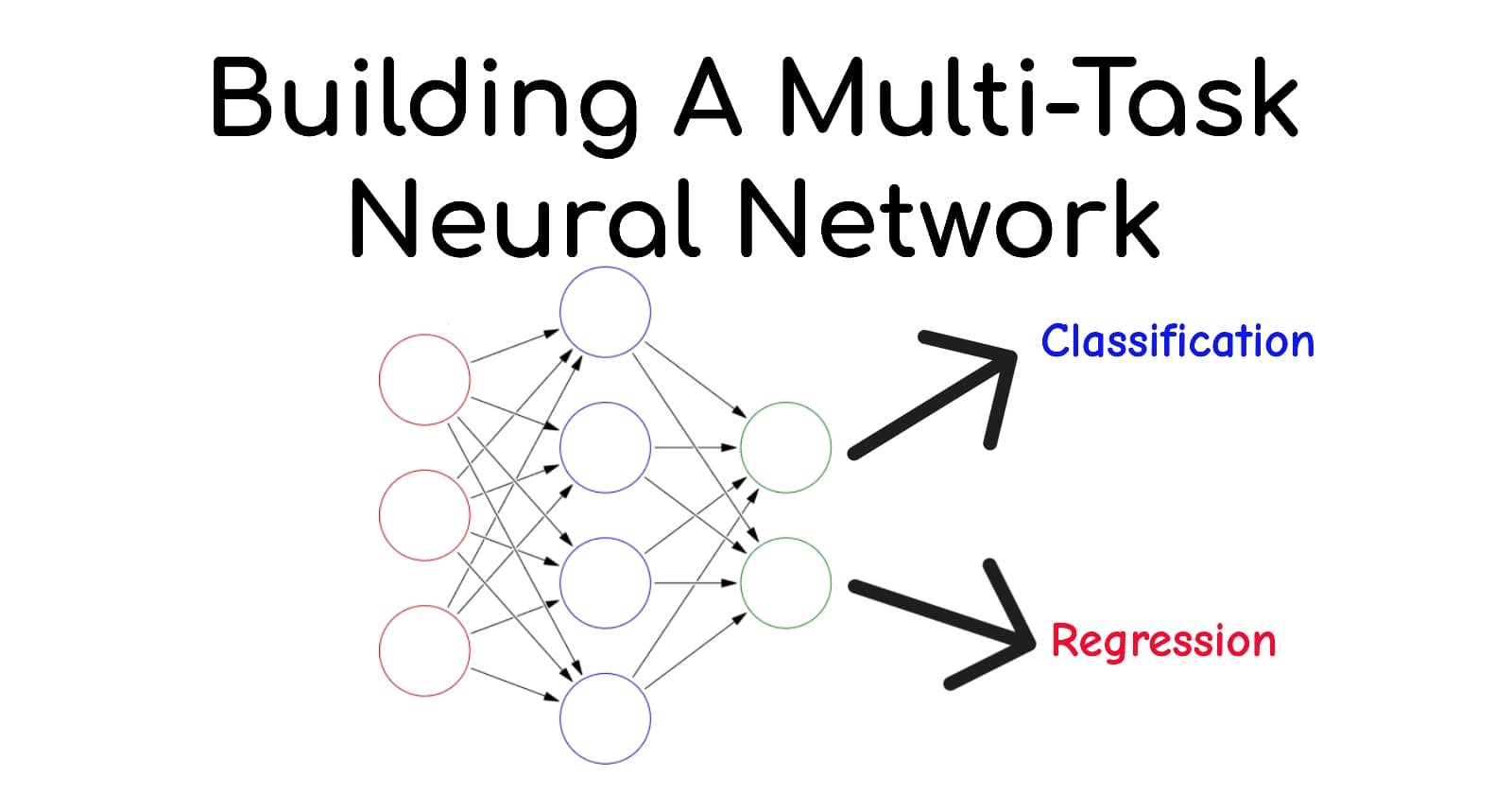

The deep learning world has been familiar with language models for decades now. In the past, language models were typically based on RNNs or LSTMs, but today the term usually refers to Transformer-based neural networks that learn language patterns and make predictions based on that knowledge. The large number of parameters, along with the large amount of data fed into these systems for training, is what makes these language models “large”.

Core Concepts

If you are to build systems centered around these LLMs, you need to understand some core concepts:

Tokens

Tokens are essentially the units of language the LLM processes. For easier understanding, we can think of tokens as individual words, but this is rarely the case in practice: a token is often a piece of a word, a punctuation mark, or a space joined to a word.
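To make the gap between words and tokens concrete, here is a minimal sketch of a greedy longest-match subword splitter. The vocabulary is made up for illustration; real tokenizers learn their vocabularies from data and use more sophisticated algorithms, so treat this only as an intuition aid.

```python
# Toy vocabulary of subword pieces (hypothetical, for illustration only).
TOY_VOCAB = {"un", "believ", "able", "token", "ization", "s", " "}


def toy_tokenize(text: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match split; unknown characters become one-character tokens."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate piece starting at position i first.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # No piece matched, so fall back to a single character.
            tokens.append(text[i])
            i += 1
    return tokens


sentence = "unbelievable tokenizations"
tokens = toy_tokenize(sentence, TOY_VOCAB)
print(len(sentence.split()), "words ->", len(tokens), "tokens:", tokens)
```

Two words turn into seven tokens here, which is why token counts are usually higher than word counts.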

When working with LLMs, tokens are one of the major factors that determine the latency and cost of your system, since inference providers tend to bill you based on the input and output token count.

Context Size

LLMs are not magic. They can only “remember” and reference a limited amount of information when making predictions. This limit is the context size, and it is usually described as a maximum number of tokens.
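Because the context is bounded in tokens, a common way to stay inside it is to keep only the most recent messages that fit a budget. The sketch below assumes a very rough heuristic of about 4 characters per token; both the heuristic and the helper names are assumptions for illustration, and a real system would count tokens with the model's own tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Very rough heuristic (assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def trim_history(messages: list[dict], max_tokens: int) -> list[dict]:
    """Keep the newest messages whose estimated total fits within max_tokens."""
    kept = []
    used = 0
    # Walk from newest to oldest and stop at the first message that does not fit.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # Restore chronological order.
    return kept


history = [
    {"role": "user", "content": "a" * 400},       # about 100 tokens
    {"role": "assistant", "content": "b" * 200},  # about 50 tokens
    {"role": "user", "content": "c" * 40},        # about 10 tokens
]
trimmed = trim_history(history, max_tokens=60)
print([m["role"] for m in trimmed])
```

With a budget of 60 estimated tokens, only the last two messages survive. Dropping old messages is the simplest policy; summarizing them is another option when older context still matters.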

When developing LLM-based systems, it is very important to optimize the context you feed to the model. If the context gets too large, important information gets diluted and the model tends to hallucinate more and produce misinformation. If your system needs complex context or memory management, it is better to integrate a memory management solution like mem0.ai.

System Prompt

Prompts are the instructions or inputs we provide to an LLM to guide its behavior. The system prompt is the primary, initial instruction given to the model and defines how it should behave throughout the interaction.

System prompts are typically used to set the model’s role, tone, constraints, and high-level rules. Because models are generally trained to give them priority over other prompts, system prompts play a critical role in shaping consistent and predictable behavior in LLM-based applications.
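In OpenAI-style chat APIs, the system prompt is usually passed as the first message with the role "system", followed by the user's input. A minimal sketch, where the helper name and the example prompt text are my own and not part of any library:

```python
def build_messages(system_prompt: str, user_input: str) -> list[dict]:
    """Return a chat message list with the system prompt placed first."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_input},
    ]


messages = build_messages(
    system_prompt="You are a concise assistant. Answer in one sentence.",
    user_input="What is a token?",
)
for message in messages:
    print(message["role"], "->", message["content"])
```

The resulting list is what you would pass as the `messages` argument of a chat completion request.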

Integration Methods

Using APIs

This is the simplest method to interface with any LLM. Large AI companies like OpenAI and Google provide APIs for their LLMs. There are also third-party inference providers like Groq that allow us to interface with a long list of LLMs.

Choosing which API to use depends entirely on your use case and budget. My LLM API of choice is Google’s Gemini API. This comes down to four important factors:

You can get a free API key without any payment information.

The free tier for the API is generous.

The large context size of 1 million+ tokens.

Google AI Studio, which is an online playground that allows you to test out different Gemini models before you actually begin using the API.

Let’s write code

Each major inference provider tends to provide its own libraries for interfacing with its APIs, but the OpenAI client and the OpenAI API have become a de facto industry standard. Many major inference providers make their APIs compatible with the OpenAI client. In fact, Google itself allows you to use the OpenAI client to interface with the Gemini models. We will also be using the OpenAI client in this blog example.

Let’s begin by creating a new Python project and by installing the OpenAI client library using:

uv add openai

You can obtain a Gemini API key for free from Google AI Studio. After you obtain the API key, store it securely in an environment variable named “GEMINI_API_KEY”. Then, we can initialize the client using:

import os

from openai import OpenAI

# Point the OpenAI client at Gemini's OpenAI-compatible endpoint.
client = OpenAI(
    api_key=os.environ.get("GEMINI_API_KEY"),
    base_url="https://generativelanguage.googleapis.com/v1beta/openai/"
)
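Since `os.environ.get` quietly returns `None` when the variable is missing, it can help to fail early with a clear message instead of getting an authentication error later. A small sketch; the `require_env` helper is hypothetical and not part of the OpenAI client:

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable or raise a clear error."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set")
    return value
```

You could then pass `api_key=require_env("GEMINI_API_KEY")` when creating the client.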

We will be using the API to generate some synthetic data that we will use to finetune our custom model later on. We will be creating an entity and relationship dataset. For that, we will write the prompt as follows:

PROMPT = """
You are a synthetic data generation model that extracts entities and relationship triplets from sentences. Generate a JSON list of inputs and outputs in the following format:
{
  "input": "Mukul is working on deploying the Mattermost server on AWS.",
  "output": {
    "entities": ["Mukul", "Mattermost", "AWS"],
    "relationships": [
      ["Mukul", "DEPLOYS", "Mattermost"],
      ["Mattermost", "DEPLOYED_ON", "AWS"]
    ]
  }
}
Generate only the JSON and nothing else. You are part of a larger pipeline and it will break if you generate anything other than the JSON.
Do not generate the example I provided.
Generate as many synthetic data pairs as you can.
"""

There are many models you can choose from, but since this is a simple task and we are prioritizing speed, I will be using the lightweight Gemini 2.5 Flash Lite model. We can create a simple function to generate the content based on the prompt:

def generate_content():
    # Send the prompt as a single user message and return the generated text.
    response = client.chat.completions.create(
        model="models/gemini-flash-lite-latest",
        messages=[
            {
                "role": "user",
                "content": PROMPT
            }
        ]
    )
    return response.choices[0].message.content
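Even with a strict prompt, models sometimes wrap their answer in a Markdown code fence, which would break a plain `json.loads` call. Below is a hedged sketch of a parser that tolerates this; the `parse_json_list` helper is my own addition for illustration and is not part of the OpenAI client.

```python
import json
import re

FENCE = "`" * 3  # Three backticks, the Markdown code fence marker.


def parse_json_list(raw: str) -> list:
    """Parse model output as a JSON list, tolerating a surrounding code fence."""
    text = raw.strip()
    # Drop an opening fence (optionally followed by a language tag) and a closing fence.
    text = re.sub(r"^" + FENCE + r"[a-zA-Z]*\s*", "", text)
    text = re.sub(r"\s*" + FENCE + r"$", "", text)
    data = json.loads(text)
    if not isinstance(data, list):
        raise ValueError("expected a JSON list of input/output pairs")
    return data


wrapped = FENCE + 'json\n[{"input": "x", "output": {}}]\n' + FENCE
print(parse_json_list(wrapped))
```

Calling `parse_json_list(generate_content())` would then give you a Python list you can append to your dataset, and it raises an error when the response is not valid JSON so that bad batches can be retried or skipped.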

To better understand the different methods in the OpenAI client, please refer to the official documentation.

Running Locally Using Ollama

Another popular way to interface with LLMs is to use Ollama to run these models locally. Ollama is an open-source platform that allows you to run AI models locally without relying on cloud-based providers. Ollama can easily be downloaded from its website, and you can choose from a large number of models. These models are not limited to LLMs: you can even play around with VLMs, embedding models, etc.

Ollama can be used directly from the terminal, or you may use Ollama to serve an OpenAI compatible server locally.
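As a rough sketch of the second option, the snippet below talks to Ollama's OpenAI-compatible endpoint using only the standard library. It assumes Ollama is running on its default local address and that a model tagged `gemma3:1b` has already been pulled; swap in whichever model you actually have. The helper names are my own.

```python
import json
import urllib.request

# Assumptions: Ollama's default local address and a model you have already pulled.
OLLAMA_BASE_URL = "http://localhost:11434/v1"
MODEL_NAME = "gemma3:1b"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the local server."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{OLLAMA_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request; this needs the Ollama server to be running locally."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the server running, `print(ask("Say hello in one short sentence."))` should print the model's reply. The OpenAI client works here as well if you point its `base_url` at the same address.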

Ollama is fine if you only want to use AI models on your local machine, but it is not well suited to production-level workflows. Keep reading if you want to know how we can serve LLMs from our own servers at a production level.

Finetuning an LLM

While most base models work great and system prompts are generally enough to tweak the outputs of an LLM, sometimes we do need these models to behave in a very specific way, have some domain specific knowledge, or to generate output in a hyper specific format. This is where model finetuning comes into play.

Do note that in most cases, finetuning is not necessary at all. Refer to this article to know if finetuning is needed for you or not: FAQ + Is Fine-tuning Right For Me?

Efficient Finetuning using LoRA

LLMs are really large. They have a huge number of parameters, and it is virtually impossible for many of us to fully finetune an LLM given the cost and resource constraints. However, we can use several PEFT (Parameter-Efficient Fine-Tuning) methods to make LLM finetuning fast and feasible.

One of the most famous PEFT methods is to train LoRA (Low-Rank Adaptation) adapters. Without going into the details, LoRA adapters are basically like LEGO bricks that go on top of your existing LLM. They let us train only a small fraction of the parameters while the original weights stay frozen, which essentially finetunes our LLM.
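For a feel of the savings, LoRA learns two small matrices for a weight matrix of shape d_out x d_in: B (d_out x r) and A (r x d_in), whose product is added to the frozen weight. The sketch below simply counts parameters; the layer size is a made-up example and not taken from any particular model.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Parameters in a dense weight matrix of shape d_out x d_in."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the LoRA pair: B is d_out x rank, A is rank x d_in."""
    return rank * (d_out + d_in)


d_out = d_in = 1024  # Hypothetical layer size.
rank = 16

full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full: {full:,}  lora: {lora:,}  fraction: {lora / full:.4f}")
```

For this layer, the adapter trains 32,768 parameters instead of 1,048,576, and the gap grows as the layers get larger.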

Hugging Face and the transformers library

Hugging Face is a platform and community that provides access to thousands of pretrained models, datasets, and tools for natural language processing and AI applications. It allows developers to easily download, share, and experiment with models like LLMs.

The Transformers library, developed by Hugging Face, is a Python toolkit that simplifies working with these models. It provides an interface for tokenization, model loading, inference, and fine-tuning, making it easy to integrate large language models into systems. It also supports PEFT techniques like LoRA.

Let’s write code

If you plan on finetuning LLMs on CUDA-enabled devices or on Google Colab, I would highly recommend you use a framework like Unsloth, which provides quantized models for faster finetuning. Since my device does not support CUDA, I will just be using the bare transformers library.

Begin by creating a new Python project and installing all required libraries:

uv add accelerate datasets peft transformers torch trl

After installing, let us first make all the needed imports:

import json

import torch
from datasets import Dataset
from peft import LoraConfig, PeftModel
from transformers import AutoModelForCausalLM, AutoTokenizer
from trl import SFTConfig, SFTTrainer

We will be finetuning a relatively small model, Gemma 3 1B, on the synthetic dataset we generated earlier.

Sample of the dataset:

[
  {
    "input": "The new processor manufactured by Intel significantly improves the performance of the latest Macbook Pro.",
    "output": {
      "entities": ["processor", "Intel", "Macbook Pro"],
      "relationships": [
        ["processor", "MANUFACTURED_BY", "Intel"],
        ["processor", "IMPROVES_PERFORMANCE_OF", "Macbook Pro"]
      ]
    }
  }
]

The dataset contains 5000 input-output pairs in this format. However, we first need to convert this dataset into an instruction format before finetuning a model on it. We can format the data and convert it into a usable dataset like this:

with open("entities.json", "r") as f:
    raw_data = json.load(f)

processed_data = []
for item in raw_data:
    input_text = item.get("input", "")
    output_json = item.get("output", {})
    # Serialize the target as a JSON string.
    response_str = json.dumps(output_json, ensure_ascii=False)
    # Instruction, input, and expected output, ending with the <eos> marker.
    full_text = (
        "Extract entities and relationships from the text below as JSON.\n\n"
        f"Input: {input_text}\n\n"
        f"JSON Output:\n{response_str}<eos>"
    )
    processed_data.append({"text": full_text})

dataset = Dataset.from_list(processed_data)
len(dataset), dataset[0]

Then, we will select a device to train on using simple conditions and also put the model name and output directory name in respective variables.

MODEL_ID = "google/gemma-3-1b-it"
OUTPUT_DIR = "gemma-ner-lora"

if torch.backends.mps.is_available():
    device = "mps"  # Apple Silicon
    torch_dtype = torch.bfloat16
elif torch.cuda.is_available():
    device = "cuda"  # NVIDIA
    torch_dtype = torch.bfloat16
else:
    device = "cpu"
    torch_dtype = torch.float32

print(f"Using device: {device}")  # mps in my case

Then, we will load the tokenizer, and the LLM. If this is your first time running this piece of code, the models will be downloaded from Hugging Face Hub and will take some time.

tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID,
    torch_dtype=torch_dtype,
    device_map=device,
    use_cache=False  # Disable cache for training
)

We will also define the PEFT configurations by setting the alpha and rank values for the LoRA adapters along with the modules we will need to target. These target modules differ by models, so do refer to the finetuning documentation for specific models.

peft_config = LoraConfig(
    r=16,
    lora_alpha=32,
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
    target_modules=[  # Gemma specific target modules
        "q_proj",
        "k_proj",
        "v_proj",
        "o_proj",
        "gate_proj",
        "up_proj",
        "down_proj"
    ]
)

Then, finally, we will define the training arguments and begin training:

training_args = SFTConfig(
    output_dir=OUTPUT_DIR,
    dataset_text_field="text",
    max_length=512,
    packing=False,
    # Standard Training Args
    per_device_train_batch_size=2,
    gradient_accumulation_steps=4,
    learning_rate=2e-4,
    logging_steps=10,
    max_steps=100,
    save_strategy="no",
    optim="adamw_torch",
)

trainer = SFTTrainer(
    model=model,
    processing_class=tokenizer,
    train_dataset=dataset,
    peft_config=peft_config,
    args=training_args
)

trainer.train()
# Save the trained LoRA adapter to OUTPUT_DIR.
trainer.save_model(OUTPUT_DIR)

That’s it! The finetuning will begin now. Keep an eye on the loss and make sure it is decreasing. As a rough rule of thumb, we can usually stop training once the loss is below 0.5, but this value may differ in your case.
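If you prefer to check the trend programmatically rather than by eye, the trainer keeps its logged metrics in `trainer.state.log_history`, a list of dictionaries. The sketch below works on such a list; the helper names are my own and the sample numbers are made up for illustration.

```python
def loss_curve(log_history: list[dict]) -> list[float]:
    """Collect the training loss from every log entry that has one."""
    return [entry["loss"] for entry in log_history if "loss" in entry]


def is_improving(losses: list[float], window: int = 3) -> bool:
    """Compare the mean of the last `window` losses with the mean of the first."""
    if len(losses) < 2 * window:
        return False  # Not enough points to judge a trend.
    first = sum(losses[:window]) / window
    last = sum(losses[-window:]) / window
    return last < first


# Made-up log entries in the same shape as trainer.state.log_history.
sample_log = [
    {"step": 10, "loss": 2.31},
    {"step": 20, "loss": 1.74},
    {"step": 30, "loss": 1.12},
    {"step": 40, "loss": 0.83},
    {"step": 50, "loss": 0.61},
    {"step": 60, "loss": 0.47},
    {"step": 60, "train_runtime": 120.0},  # Summary entry without a "loss" key.
]
losses = loss_curve(sample_log)
print(losses, "improving:", is_improving(losses))
```

After training, you would call `loss_curve(trainer.state.log_history)` instead of using the sample list.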

Then, to check our fine tuned model, we will now load the tokenizer and the model again. This time, we will attach our LoRA adapters to the model as well.

model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID,
    torch_dtype=torch.bfloat16,
    device_map="auto"
)
# Attach the trained LoRA adapter to the base model.
model = PeftModel.from_pretrained(model, OUTPUT_DIR)
tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)

Then, we can check the model inference by using the transformers library itself:

test_input = "Mukul is writing a blog on how to train LLMs and he will be using vLLM for inference."
prompt = f"Extract entities and relationships from the text below as JSON.\n\nInput: {test_input}\n\nJSON Output:\n"

inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
outputs = model.generate(
    **inputs,
    max_new_tokens=200,
    do_sample=True,
    temperature=0.1
)

# Keep only the newly generated tokens, dropping the prompt tokens.
generated_tokens = outputs[0][inputs["input_ids"].shape[-1]:]
print(tokenizer.decode(generated_tokens, skip_special_tokens=True))

I have chosen a very low temperature (0.1) to ensure the model outputs are consistent over multiple inferences.
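To see why a low temperature makes outputs more consistent, recall that sampling divides the model's scores (logits) by the temperature before applying softmax. The sketch below uses made-up logits for three candidate tokens and is only meant to illustrate the effect.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities after dividing them by the temperature."""
    scaled = [x / temperature for x in logits]
    largest = max(scaled)  # Subtract the max for numerical stability.
    exps = [math.exp(x - largest) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # Hypothetical scores for three candidate tokens.
for temperature in (1.0, 0.1):
    probs = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At a temperature of 0.1, almost all of the probability mass lands on the highest-scoring token, so repeated runs tend to pick the same tokens.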

Output:

As you can see, the LLM is now capable of outputting entities and their relationships in the format we specified earlier.
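To check the format programmatically rather than by eye, we can parse the generated string and verify its shape. This is a minimal sketch: the `validate_extraction` helper is my own, and the rule that every relationship must start and end at a listed entity is an assumption based on the dataset samples, so relax it if your data differs.

```python
import json


def validate_extraction(raw: str) -> dict:
    """Parse a generated string and check it matches the entity/relationship format."""
    data = json.loads(raw)  # Raises ValueError (JSONDecodeError) on invalid JSON.
    if not isinstance(data, dict) or "entities" not in data or "relationships" not in data:
        raise ValueError("expected an object with 'entities' and 'relationships'")
    entities = data["entities"]
    if not isinstance(entities, list) or not all(isinstance(e, str) for e in entities):
        raise ValueError("'entities' must be a list of strings")
    for rel in data["relationships"]:
        if not (isinstance(rel, list) and len(rel) == 3):
            raise ValueError(f"relationship is not a triplet: {rel!r}")
        head, _relation, tail = rel
        # Assumption: both ends of a relationship appear in the entity list.
        if head not in entities or tail not in entities:
            raise ValueError(f"relationship uses an unknown entity: {rel!r}")
    return data


# Example string in the dataset's format (taken from the dataset sample).
sample = (
    '{"entities": ["processor", "Intel", "Macbook Pro"], '
    '"relationships": [["processor", "MANUFACTURED_BY", "Intel"]]}'
)
print(validate_extraction(sample)["entities"])
```

Running this check over a handful of test sentences gives a quick estimate of how often the finetuned model follows the format.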

Create an Inference Server

Now you understand how to run LLMs locally, and you have also finetuned your own LLM. The next step is to serve it to other people through your very own inference service. LLMs are very resource hungry, and it is difficult to optimize them for parallel inference, which is crucial for web servers.

To manage this complexity, we will be using a simple and easy-to-use tool called vLLM. vLLM is an open-source inference and serving engine for LLMs designed to make running these models fast, efficient, and scalable on your own hardware. It can be used directly from the command line: a single command spins up a production-ready, OpenAI-compatible inference server.

You can install vLLM on your device by following the instructions in the official documentation.

Now, we can serve our finetuned Gemma 3 1B model by running just a single command:

vllm serve MODEL_NAME \
  --enable-lora \
  --lora-modules IDENTIFIER=ADAPTER_PATH \
  --tokenizer TOKENIZER_PATH

In our case, we can serve the Gemma 3 1b model along with the LoRA adapters as such:
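A plausible form of that command can be assembled from values used elsewhere in this post: the base model ID, the adapter output directory, and the `ner` name that the client code requests. Treat the result as a sketch under those assumptions rather than the exact command, and adjust the paths to your setup.

```python
import shlex

BASE_MODEL = "google/gemma-3-1b-it"  # MODEL_ID from the finetuning step.
ADAPTER_DIR = "gemma-ner-lora"       # OUTPUT_DIR from the finetuning step.
ADAPTER_NAME = "ner"                 # Name the client passes as `model`.

# Fill in the placeholders of the generic vllm serve command shown above.
command = [
    "vllm", "serve", BASE_MODEL,
    "--enable-lora",
    "--lora-modules", f"{ADAPTER_NAME}={ADAPTER_DIR}",
    "--tokenizer", BASE_MODEL,
]
print(shlex.join(command))
```

The printed line can be pasted into a terminal from the directory that contains the adapter folder.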

Then, you can find a fully OpenAI compatible server running on localhost:8000. To test if the model is being served correctly, we can use the OpenAI client once again.

from openai import OpenAI

client = OpenAI(base_url="http://localhost:8000/v1", api_key="EMPTY")

test_input = "This is a demo of how vLLM can be used to serve LLMs easily."
raw_prompt = f"Extract entities and relationships from the text below as JSON.\n\nInput: {test_input}\n\nJSON Output:\n"

response = client.completions.create(
    model="ner",
    prompt=raw_prompt,
    max_tokens=200,
    temperature=0.1
)

print(response.choices[0].text)

After running this code, you can see the model is being served as expected, and we are able to interact with it using the OpenAI client.
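Since parallel inference is the main reason to use a dedicated serving engine, it is worth sending several requests at once to see how the server behaves. The sketch below is a generic helper built on a thread pool; `fake_request` is a stand-in so the example runs without a server, and you would replace it with a function that wraps the `client.completions.create` call.

```python
from concurrent.futures import ThreadPoolExecutor


def run_concurrently(fn, inputs, max_workers: int = 8) -> list:
    """Apply fn to every input on a thread pool, preserving input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fn, inputs))


def fake_request(sentence: str) -> str:
    """Stand-in for a real request to the inference server."""
    return f"processed: {sentence}"


sentences = ["First test sentence.", "Second test sentence.", "Third test sentence."]
for result in run_concurrently(fake_request, sentences):
    print(result)
```

Threads are a reasonable fit here because each request spends most of its time waiting on the network rather than using the CPU.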

Conclusion

Congratulations! You have reached the end of this blog post. I hope that this post was helpful to you. If you hope to read more posts like this, consider following me on Hashnode, and joining the newsletter.

If you have any queries or suggestions, please leave a comment or reach out to me directly by sending an email to mukul.development@gmail.com.